TikTok says it has removed content and banned accounts after a BBC investigation highlighted a cluster of AI-generated profiles using sexualised black female avatars to steer users towards explicit material. That may sound like a niche moderation story, but it is actually a useful snapshot of where the internet is heading for everyone else.

The BBC, working with researchers from the independent publication Riddance, identified dozens of accounts across TikTok and Instagram using highly stylised AI-generated women, often with exaggerated features and racialised language. In some cases the accounts were not clearly labelled as AI-made. In one especially unsettling example, a real creator’s videos were reportedly altered so that an artificial face was placed over her body, then reposted at far greater scale than the original.

What makes this worth ordinary readers’ attention is not just the ugliness of the content. It is the way AI can now mass-produce online identities that look believable enough to attract followers, likes and clicks before most people stop to ask what they are really looking at.

Why this is bigger than one platform clean-up

According to the BBC report, one of the accounts built around an artificial persona picked up millions of followers within weeks. That tells you something important. We are moving into an online environment where a “person” can be assembled from generated images, borrowed video clips, a bit of platform savvy and a link tree leading somewhere more commercial. The account does not need to be real in any meaningful sense to work.

That matters because social platforms train us to trust familiar patterns. A polished profile picture, regular uploads, thirsty comments and a big follower count can create a quick sense that an account is authentic, or at least ordinary. AI makes that impression cheaper to manufacture. In this case, the result was not harmless experimentation. It appears to have mixed deception, sexual exploitation and racist caricature into one ugly package.

There is also a basic fairness problem here. If a real person’s clips can be lifted, altered and scaled up without proper consent, the line between “creative remix” and simple theft disappears fast. That overlaps with the wider question of likeness and identity that we touched on in our recent piece on Luke Littler and AI fakes. Famous people may have more lawyers and better-known faces, but ordinary users are not magically protected from the same underlying tools.

What TikTok’s own rules say

TikTok’s current Integrity and Authenticity guidelines say creators must label AI-generated or significantly edited content when it shows realistic-looking people or scenes. The same section says the company does not allow the use of private people’s likenesses without consent, and it specifically bans sexualised or fetishised depictions. In a separate newsroom update, TikTok says it has already labelled more than 1.3 billion videos using a mix of creator disclosure, detection systems and embedded credentials.

In other words, the platform’s written rules already recognise the problem. The awkward bit is enforcement. The BBC reported that TikTok acted after being approached for comment and later said it had removed content and banned accounts that broke its rules. That is better than shrugging, but it is also a reminder that harmful material can travel a very long way before the clean-up arrives.

What ordinary users should take from this

The first takeaway is that “looks real” is becoming a weaker and weaker test online. If you or your family spend time on TikTok, Instagram or similar apps, it is worth assuming that some apparently human creators are partly synthetic, heavily edited or entirely fabricated. That does not mean every polished video is fake. It means visual confidence is no longer the same thing as authenticity.

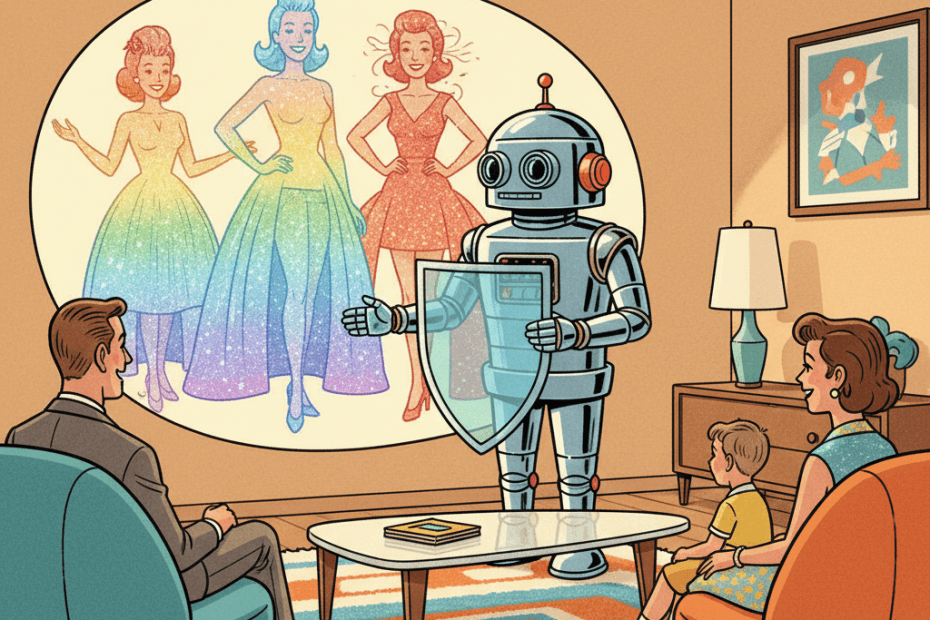

The second is that labelling matters more than platforms sometimes admit. Clear labels do not solve everything, but they give viewers a fighting chance to understand what they are seeing. We made a similar point in our article on AI safety labels: when software makes content more convincing, the burden should not fall entirely on users to spot the seams.

The third is that AI abuse often arrives wrapped in something familiar. It may look like an influencer account, a fan page, a funny clip or a glamorous profile. Underneath, it can be doing something much grimmer: stealing from real people, pushing viewers towards scams or explicit sites, or reinforcing nasty stereotypes because that happens to drive engagement. The technology is new; the incentives are not.

The reassuring part

This is not a story about unstoppable magic. It is a story about platform rules, moderation choices and whether companies are willing to enforce the standards they already publish. TikTok has now signalled that at least some of these accounts crossed a line. That matters, because it shows synthetic content does not have to be treated as an ungovernable force of nature.

Still, this episode is a good reminder to be a bit more sceptical online without becoming paranoid. AI is making fake personas easier to build, easier to scale and easier to monetise. Ordinary users do not need to become forensic analysts. But we probably do need to get more comfortable with a simple habit: if an account seems oddly polished, oddly repetitive or oddly detached from normal human behaviour, there may be less reality there than the screen suggests.

Sources:

BBC News — AI videos of sexualised black women removed from TikTok after BBC investigation

TikTok Community Guidelines — Integrity and Authenticity

TikTok Newsroom — More ways to spot, shape and understand AI-generated content